Resource Efficiency and AI

9/20/2025

Introduction

If you follow discourse around artificial intelligence and LLMs (large language models), chances are you've heard claims about their enormous resource demands (if you haven't, see below!). Let's take a close look at some of those claims and at how we can address worries surrounding resource consumption. Since a wide variety of factors come together to create and power LLMs, we'll limit the scope of this post to two big categories: energy and water. After some background on these inputs, we'll explore possible avenues for reducing consumption.

Water Usage

Often overlooked, water plays a crucial role in sustaining cloud infrastructure and thus our modern AI applications. Projections for global water withdrawal attributable to AI in 2027 range from 4.2 to 6.6 billion cubic meters, about half the annual water withdrawal of the U.K. Of that water, 0.38–0.60 billion cubic meters will be evaporated or otherwise consumed. To contextualize this measurement for a WCU student, the lower end of that range is greater than the total amount of drinking water distributed in Philadelphia in a year [1][2]. Furthermore, aggressive projections for AI water withdrawal in the U.S. alone for the year 2028 exceed the 2027 global estimates [1][3].

1

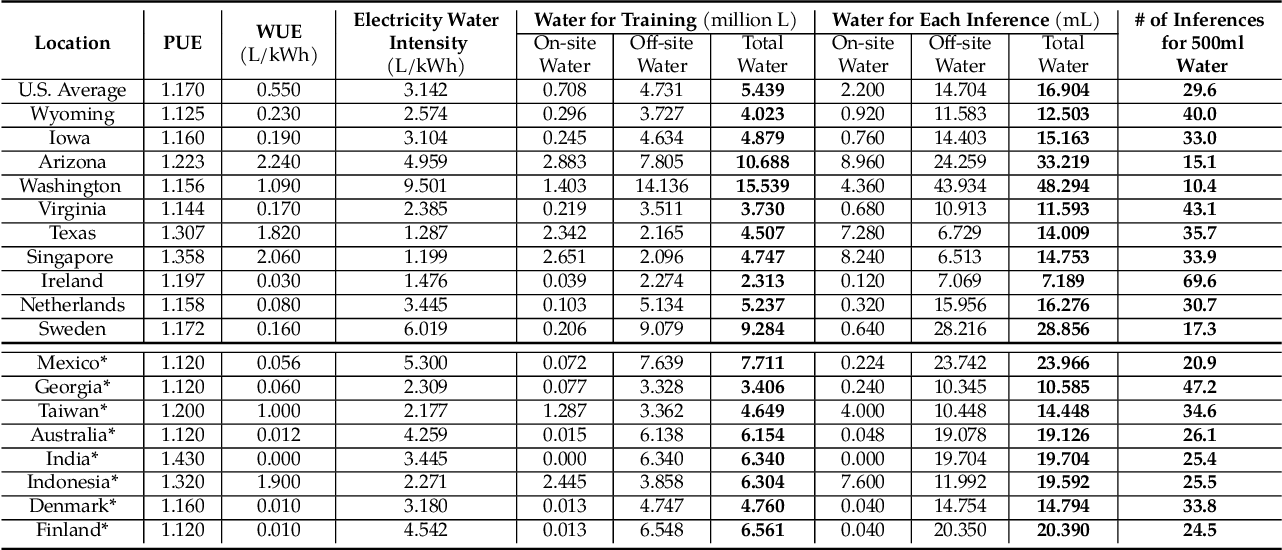

Estimate of GPT-3's average operational water consumption footprint. '*' denotes data centers under construction as of July 2023. PUE/WUE denotes Power/Water Usage Effectiveness. EWIF denotes Electricity Water Intensity Factor.


Energy Usage

Water and energy usage are deeply interconnected, so we'll turn to energy next. To best understand the relationship between the two, let's look at the electricity projections for the same years. Estimates for AI's global electricity usage in 2027 range from 85 to 134 TWh [1]. To provide another local comparison, the bottom of that range is 5 times as much energy as all of Philadelphia County consumed in 2024 [4]. The same study used to forecast the 2028 U.S. water withdrawal figure places the country's AI electricity consumption for that year at 150–300 TWh [1][3]. To narrow the context and provide insight into a particular operation, the energy used by the GPUs that trained GPT-3 rivaled the monthly consumption of about 1,450 U.S. homes [5]. Regardless of whether you focus on the lower or upper bound of these estimates, the figures underscore the colossal scale of what goes on behind the scenes of some of today's best-known apps. To understand how things can be improved, let's identify some of the biggest contributing factors.

Background

We've now seen how resource-intensive the modern AI ecosystem can be, but where does all the water and energy go? Considering the massive scale of AI applications, we can start at a high level and identify shortcomings in system-level infrastructure (data centers). Referring back to the chart under the "Water Usage" section, we can identify the U.S. data centers with the highest "WUE," which indicates low efficiency in on-site (cooling) water usage. When sorted by this attribute, the three least efficient data centers are the ones in Arizona (2.240), Texas (1.820), and Washington (1.090). This grouping suggests that data centers in hot, arid places require more water for cooling. Such geographical placement may seem slightly perplexing; of course hotter areas are harder to cool! There are, however, other considerations when constructing new data centers. Aside from on-site water usage (and business incentives), power infrastructure also plays a role and can offer some tradeoffs through off-site water usage, the water consumed in generating the electricity a data center draws. When the data centers are sorted by EWIF instead, Texas drops out of the top three, possibly due to the availability of wind and solar energy. Considering both WUE and EWIF, it becomes clear that optimizing water usage isn't as simple as doing all computations in a cold climate.
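
To make the WUE/EWIF tradeoff concrete, here is a minimal sketch of how the two metrics can combine into one footprint. It assumes the common formulation in which on-site water is server energy times WUE and off-site water is server energy times PUE times EWIF, with both intensities in liters per kWh. The WUE values are the three quoted above; the PUE and EWIF values, the `Site` class, and the `water_footprint_liters` function are hypothetical placeholders for illustration, not figures from the chart.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    wue: float   # on-site water usage effectiveness (assumed L/kWh)
    pue: float   # power usage effectiveness (placeholder value)
    ewif: float  # off-site electricity water intensity (placeholder, L/kWh)


def water_footprint_liters(server_energy_kwh: float, site: Site) -> float:
    """On-site cooling water plus off-site water used to generate the power."""
    onsite = server_energy_kwh * site.wue
    offsite = server_energy_kwh * site.pue * site.ewif
    return onsite + offsite


# WUE values come from the text; PUE and EWIF are made up for illustration.
sites = [
    Site("Arizona", wue=2.240, pue=1.2, ewif=3.0),
    Site("Texas", wue=1.820, pue=1.2, ewif=1.5),
    Site("Washington", wue=1.090, pue=1.2, ewif=4.0),
]

by_wue = [s.name for s in sorted(sites, key=lambda s: s.wue, reverse=True)]
by_total = [
    s.name
    for s in sorted(
        sites, key=lambda s: water_footprint_liters(1000.0, s), reverse=True
    )
]

print("Ranked by on-site WUE:      ", by_wue)
print("Ranked by total water/MWh:  ", by_total)
```

With these placeholder numbers the ranking changes once off-site water is included, which is the point of the paragraph above: a site that looks efficient on WUE alone can still carry a large footprint through its electricity supply.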

Going down a level, we can consider the performance (operations per second) required to train and run sophisticated models. This power comes from GPUs. While they are performant, tasks typically do not utilize them to their fullest potential. Despite techniques to address this underutilization, average GPU utilization across global data centers ranges from 30–50% [5]. Another study finds an even lower rate (18%) in one production environment [6]. From this insight, researchers deduce that a considerable amount of energy goes toward these processors sitting in an idle or stalled state [5].
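
As a rough illustration of why low utilization matters for energy, the sketch below estimates what share of a GPU's energy is spent while idle. It assumes a deliberately simple two-state model (fully busy or fully idle), and the wattages are hypothetical placeholders rather than measurements of any real device. Only the utilization rates (18%, 30%, 50%) come from the studies cited above.

```python
def idle_energy_share(utilization: float, busy_watts: float, idle_watts: float) -> float:
    """Fraction of total energy drawn while the device is idle.

    Two-state model: the device is busy for `utilization` of the time
    and idle for the rest.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    busy = utilization * busy_watts
    idle = (1.0 - utilization) * idle_watts
    if busy + idle == 0.0:
        raise ValueError("total power draw must be positive")
    return idle / (busy + idle)


BUSY_WATTS = 400.0  # hypothetical power draw under load
IDLE_WATTS = 100.0  # hypothetical power draw when idle or stalled

for u in (0.18, 0.30, 0.50):
    share = idle_energy_share(u, BUSY_WATTS, IDLE_WATTS)
    print(f"utilization {u:.0%}: {share:.0%} of energy spent idle")
```

Even with an idle draw set to a quarter of the busy draw, the idle share grows quickly as utilization falls, which is consistent with the researchers' conclusion.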

This underutilization draws attention to a more systemic issue: In modern processors, data must travel between a memory unit and a compute unit. Since the compute units must wait for data to arrive, a bottleneck arises based on how fast the data can be accessed (the von Neumann bottleneck) [7]. While alternative architectures are being researched, we can also address this bottleneck in how we manage resources at the software level. One major component of this challenge is memory management. By optimizing memory usage, we can reduce stalls, increase throughput, and ultimately alleviate some energy demand.

Memory Organization

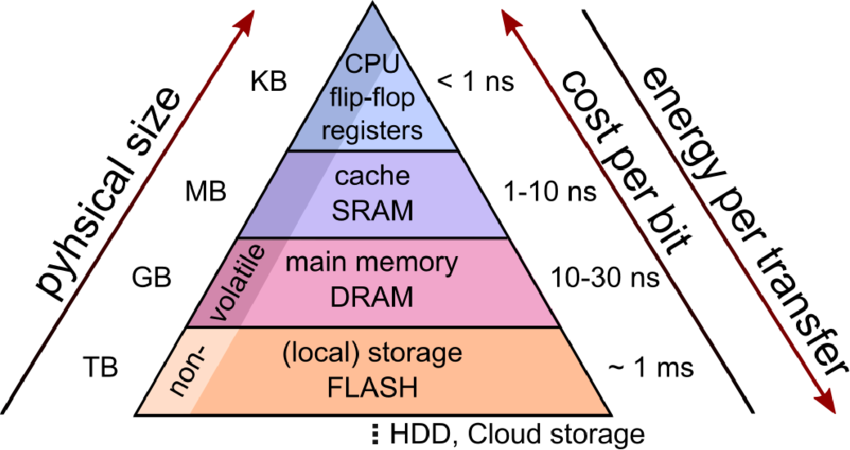

To better understand how memory management factors into performance, let's start by taking a look at how a computer organizes data. We can divide memory into two different categories: primary (internal, volatile) and secondary (external, non-volatile). The accompanying diagram shows the different tiers of available memory, ordered by size and access time. Registers, caches, and main memory (dynamic random-access memory) fall under the primary category. They store programs and data for the processes your computer is currently running (like this webpage!) and are directly accessible to the processor. On the other hand, things like HDDs (hard disk drives) and SSDs (solid-state drives) constitute secondary memory. They are used for long-term storage, retain data even when unpowered, and are indirectly accessible to the processor through input/output operations. As you can see in the diagram, access time for secondary storage jumps to the scale of milliseconds, whereas primary storage can be accessed in nanoseconds. Importantly, the diagram also indicates how cost per bit changes across tiers: primary storage is more complex to physically produce and therefore more expensive per bit. Taking these attributes into consideration, we can see that efficient design necessitates a balance between the two categories.
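
To see why the nanosecond-versus-millisecond gap matters so much, here is a small sketch of a weighted-average access time across the two categories. The latencies are hypothetical round numbers chosen only to match the orders of magnitude mentioned above, and `effective_access_time_ns` is an illustrative helper, not a model of any particular machine.

```python
def effective_access_time_ns(hit_rate: float, primary_ns: float, secondary_ns: float) -> float:
    """Average access time when `hit_rate` of requests are served from primary memory."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * primary_ns + (1.0 - hit_rate) * secondary_ns


PRIMARY_NS = 100.0          # hypothetical primary-memory latency (nanosecond scale)
SECONDARY_NS = 5_000_000.0  # hypothetical secondary-storage latency (5 ms)

for hit_rate in (0.90, 0.99, 0.999):
    avg = effective_access_time_ns(hit_rate, PRIMARY_NS, SECONDARY_NS)
    print(f"{hit_rate:.1%} served from primary memory: about {avg:,.0f} ns on average")
```

Under these assumptions, even a small fraction of requests falling through to secondary storage dominates the average, which is why keeping active data in primary memory is so valuable despite its higher cost per bit.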

Mitigating Resource Consumption

So far, we've identified a couple of key factors contributing to water and energy usage in AI, namely the at-scale infrastructure supporting data centers and the underutilization of compute units in current hardware. Researchers are tackling these inefficiencies at multiple levels of the technology stack. Let's look at some examples at each level.

Hardware Advancements

Any kind of hardware used to speed up operations for AI could be classified as an AI accelerator. The most widely used accelerator is the GPU. GPUs increase performance through parallel processing, enabled by having many cores. While performant, they were not designed specifically for AI workloads and thus leave room for improvement, as previously identified. At a high level, newer accelerators such as NPUs and TPUs (Neural/Tensor Processing Units) allow for more efficient computing by prioritizing access to primary, on-chip memory, and they show definite improvements compared to GPUs [5].

Software-Level Optimizations

Although hardware capabilities continue to increase, it remains important to write software that not only fully utilizes the hardware's capabilities but also doesn't waste those resources. There are a variety of ways in which programs handle memory inefficiently. One remedy, called mixed-precision training, cuts down on memory usage by reducing the precision of values in certain operations. This change might look like using 16-bit values instead of 32-bit ones: a 50% reduction in size! This simple adjustment, when applied strategically, can dramatically increase performance with only minimal losses to accuracy [9]. Aside from reducing wastefulness, we can also more fully utilize compute units that would otherwise be idle through a method called gradient checkpointing. When a program plans to reuse previously computed data, it can store only a subset of those intermediate results (the checkpoints) and have the processors recompute the rest when needed, rather than wait for all of the data to arrive from memory [5].
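
Both ideas can be sketched with nothing but the Python standard library. This is a toy illustration, not how a training framework implements either technique: the weights, the `layer` function, and the checkpoint spacing are all hypothetical. Part 1 compares the storage needed for the same values as 32-bit and 16-bit floats and measures the rounding error. Part 2 keeps only every fourth intermediate result and recomputes the others on demand.

```python
import struct


def packed_size_bytes(values, fmt_char):
    """Bytes needed to store `values` as 'f' (32-bit) or 'e' (16-bit) floats."""
    return struct.calcsize(f"<{len(values)}{fmt_char}")


def round_trip(values, fmt_char):
    """Store the values at the given precision and read them back."""
    fmt = f"<{len(values)}{fmt_char}"
    return list(struct.unpack(fmt, struct.pack(fmt, *values)))


# Part 1: mixed precision. Hypothetical weights in [-0.5, 0.5).
weights = [i / 1000 for i in range(-500, 500)]
size32 = packed_size_bytes(weights, "f")
size16 = packed_size_bytes(weights, "e")
max_error16 = max(abs(w - r) for w, r in zip(weights, round_trip(weights, "e")))
print(f"32-bit: {size32} bytes, 16-bit: {size16} bytes, max error: {max_error16:.2e}")


# Part 2: checkpointing. `layer` is a stand-in for one step of a forward pass.
def layer(x, i):
    return 0.5 * x + i


def forward_with_checkpoints(x, num_layers, every):
    """Run all layers but keep only the input and every `every`-th result."""
    checkpoints = {0: x}
    for i in range(1, num_layers + 1):
        x = layer(x, i)
        if i % every == 0:
            checkpoints[i] = x
    return checkpoints


def activation_at(checkpoints, index):
    """Recompute the result after `index` layers from the nearest earlier checkpoint."""
    start = max(k for k in checkpoints if k <= index)
    x = checkpoints[start]
    for i in range(start + 1, index + 1):
        x = layer(x, i)
    return x


NUM_LAYERS, EVERY = 12, 4
full = [1.0]  # baseline: store every intermediate result
for i in range(1, NUM_LAYERS + 1):
    full.append(layer(full[-1], i))

checkpoints = forward_with_checkpoints(1.0, NUM_LAYERS, EVERY)
recomputed = [activation_at(checkpoints, i) for i in range(NUM_LAYERS + 1)]
print(f"stored {len(checkpoints)} values instead of {len(full)}")
```

The checkpointed run reproduces every intermediate value while holding fewer of them in memory at once, at the price of some repeated computation. In real training the recomputation happens during the backward pass, which this sketch does not model.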

Operational Improvements

As previously discussed, some data centers consume resources more efficiently than others. While future constructions may be designed to consume fewer resources, current builds can be used in ways that optimize their water-consumption rates. Things to consider here include how the environment's temperature changes throughout the day and when renewable energy is most plentiful. Strategic scheduling can account for conditions like these and run workloads when cooling and energy footprints are lower. Current usage patterns leave much room for improvement [5].
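
One simple form of strategic scheduling can be sketched as a search for the cheapest time window. The example below assumes we have an hourly forecast of some footprint metric (for instance water intensity in liters per kWh) and a job that can start at any hour. The forecast values and the `best_start_hour` helper are hypothetical; a real scheduler would also weigh deadlines, energy mix, and capacity.

```python
def best_start_hour(hourly_cost, duration_hours):
    """Return the start hour that minimizes total cost over the job's window.

    The window wraps past midnight, so a job may start late in the day
    and finish early the next morning.
    """
    n = len(hourly_cost)
    if not 1 <= duration_hours <= n:
        raise ValueError("duration must be between 1 and the forecast length")

    def window_cost(start):
        return sum(hourly_cost[(start + k) % n] for k in range(duration_hours))

    return min(range(n), key=window_cost)


# Hypothetical 24-hour forecast: low overnight, peaking in the afternoon heat.
hourly_water_intensity = [
    1.2, 1.1, 1.0, 0.9, 0.9, 1.0, 1.2, 1.5, 1.8, 2.1, 2.4, 2.6,
    2.8, 2.9, 3.0, 2.9, 2.7, 2.4, 2.1, 1.8, 1.6, 1.4, 1.3, 1.2,
]

start = best_start_hour(hourly_water_intensity, duration_hours=4)
print(f"Start the 4-hour job at hour {start}")
```

With this forecast the job lands in the early morning, when the assumed cooling demand is lowest.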

Conclusion

In this post, we identified the von Neumann bottleneck, a current limitation in conventional computer architecture, as well as inefficiencies in the way we use our computing resources. With such a wide array of optimizations, it's clear that significant improvements can be made to our resource consumption. The various levels at which contributions can be made draw from fields within computer science and beyond. From the physical reactions that form the basis of computing to the governmental policies that regulate its deployment, efficient computing is a truly interdisciplinary problem that will require input from all parts of society.

Opportunities for Future Work

Although this post doesn't look deeply into any technical details, it provides a good foundation for doing so and could be the start of a series on efficient computing. Having identified some broad areas with potential for improvement, it could be helpful to examine a couple of the methods described here more closely and see how they work.

References and Links

Footnotes

1. Li, Peng, et al. “Making AI Less 'Thirsty'.” Communications of the ACM 68 (2023): 54–61.

2. Philadelphia Water Department. Resource Recovery & Energy Production. Philadelphia Water Department, https://water.phila.gov/sustainability/energy/.

3. Shehabi, A., Smith, S.J., Horner, N., Azevedo, I., Brown, R., Koomey, J., Masanet, E., Sartor, D., Herrlin, M., Lintner, W. 2016. United States Data Center Energy Usage Report. Lawrence Berkeley National Laboratory, Berkeley, California. LBNL-1005775.

4. “Philadelphia County, Pennsylvania Electricity Rates & Statistics.” FindEnergy, FindEnergy LLC, 31 July 2025, https://findenergy.com/pa/philadelphia-county-electricity/.

5. Makin, Yashasvi, and Rahul Maliakkal. “Sustainable AI Training via Hardware–Software Co-Design on NVIDIA, AMD, and Emerging GPU Architectures.” arXiv, preprint arXiv:2508.13163, 28 July 2025, https://arxiv.org/abs/2508.13163.

6. Weng, Qizhen, et al. “MLaaS in the Wild: Workload Analysis and Scheduling in Large-Scale Heterogeneous GPU Clusters.” NSDI ’22: Proceedings of the 19th USENIX Symposium on Networked Systems Design and Implementation, 4–6 Apr. 2022, Renton, WA, USENIX Association, https://www.usenix.org/system/files/nsdi22-paper-weng.pdf.

7. Hess, Peter. “Why a Decades Old Architecture Decision Is Impeding the Power of AI Computing.” IBM Research Blog, 10 Feb. 2025, research.ibm.com/blog/why-von-neumann-architecture-is-impeding-the-power-of-ai-computing.

8. Zintler, Alexander. (2022). Investigating the influence of microstructure and grain boundaries on electric properties in thin film oxide RRAM devices – A component specific approach. 10.26083/tuprints-00021657.

9. Micikevicius, P., Narang, S., Alben, J., Diamos, G., Elsen, E., Garcia, D., Ginsburg, B., Houston, M., Kuchaiev, O., Venkatesh, G., & Wu, H. (2018, February 15). Mixed precision training. arXiv.org. https://arxiv.org/abs/1710.03740